Abstract

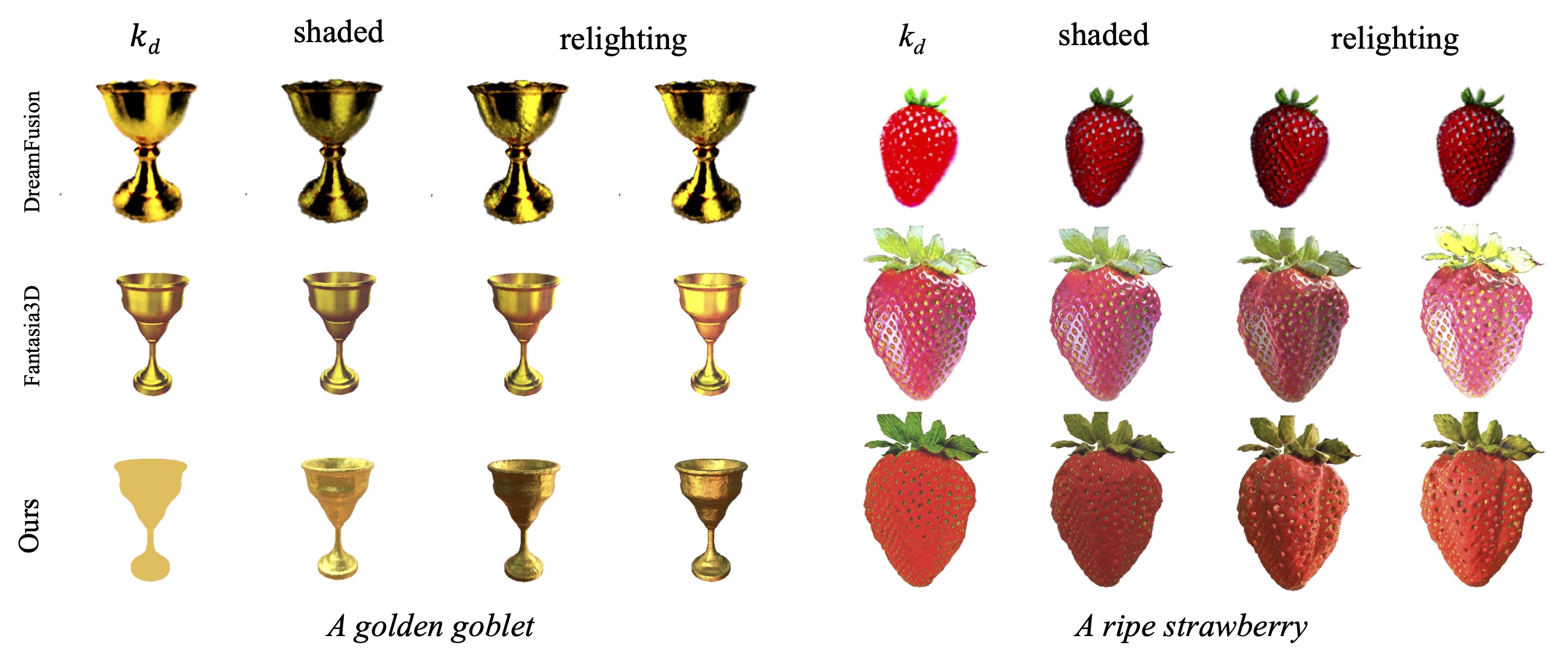

Based on powerful text-to-image diffusion models, text-to-3D generation has made significant progress in generating compelling geometry and appearance. However, existing methods still struggle to recover high-fidelity object materials: they either consider only Lambertian reflectance or fail to disentangle BRDF materials from the environment lighting. In this work, we propose Material-Aware Text-to-3D via LAtent BRDF auto-EncodeR (MATLABER), which leverages a novel latent BRDF auto-encoder for material generation. We train this auto-encoder on large-scale real-world BRDF collections and ensure the smoothness of its latent space, which implicitly acts as a natural distribution of materials. During appearance modeling in text-to-3D generation, a material network predicts latent BRDF embeddings rather than BRDF parameters. Extensive experiments show that our approach outperforms existing methods in generating realistic and coherent object materials. Moreover, high-quality materials naturally enable multiple downstream tasks such as relighting and material editing.

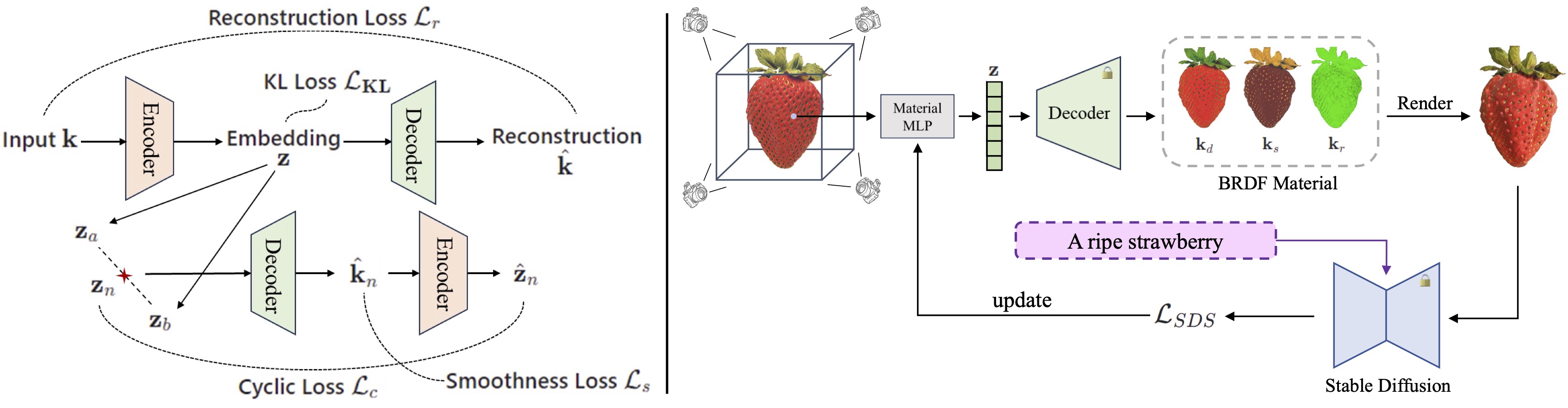

Method Overview

Left: Our latent BRDF auto-encoder is trained on the TwoShotBRDF dataset with four losses: a reconstruction loss, a KL divergence loss, a smoothness loss, and a cyclic loss. Imposing the KL and smoothness losses on the latent embeddings encourages a smooth latent space.
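To make the four-loss objective concrete, here is a minimal NumPy sketch of how such terms could be computed for one batch of 7-dim BRDF samples. It is an illustration under stated assumptions, not the authors' implementation: the linear encoder and decoder, the latent size, the loss weights, and in particular the exact forms of the smoothness and cyclic terms are hypothetical, since this page does not define them.

```python
import numpy as np

rng = np.random.default_rng(0)
BRDF_DIM, LATENT_DIM = 7, 4  # latent size is an assumption

# Hypothetical linear weights standing in for the real encoder/decoder networks.
W_enc = rng.normal(scale=0.3, size=(BRDF_DIM, 2 * LATENT_DIM))
W_dec = rng.normal(scale=0.3, size=(LATENT_DIM, BRDF_DIM))


def encode(x):
    """Return the mean and log-variance of the approximate posterior q(z | x)."""
    h = x @ W_enc
    return h[:, :LATENT_DIM], h[:, LATENT_DIM:]


def decode(z):
    """Map latent codes to BRDF parameters squashed into (0, 1)."""
    return 1.0 / (1.0 + np.exp(-(z @ W_dec)))


def autoencoder_losses(x, noise_scale=0.01, weights=None):
    """Compute the four loss terms and their weighted sum for a batch x."""
    mu, logvar = encode(x)
    # Reparameterization trick: z = mu + sigma * eps.
    z = mu + np.exp(0.5 * logvar) * rng.standard_normal(mu.shape)
    x_hat = decode(z)

    # Reconstruction: decoded BRDF should match the input.
    recon = np.mean((x_hat - x) ** 2)
    # KL divergence between q(z | x) and a standard normal prior.
    kl = np.mean(-0.5 * np.sum(1.0 + logvar - mu**2 - np.exp(logvar), axis=1))
    # ASSUMED smoothness term: a small latent perturbation should only
    # cause a small change in the decoded BRDF.
    z_near = z + noise_scale * rng.standard_normal(z.shape)
    smooth = np.mean((decode(z_near) - x_hat) ** 2)
    # ASSUMED cyclic term: sample z from the prior, decode, re-encode,
    # and compare the recovered mean with the sampled code.
    z_prior = rng.standard_normal(z.shape)
    mu_cycle, _ = encode(decode(z_prior))
    cyclic = np.mean((mu_cycle - z_prior) ** 2)

    losses = {"recon": recon, "kl": kl, "smooth": smooth, "cyclic": cyclic}
    weights = weights or {name: 1.0 for name in losses}  # weights are assumptions
    losses["total"] = sum(weights[name] * losses[name] for name in weights)
    return losses


# Random stand-in for a batch of BRDF samples (not real TwoShotBRDF data).
batch = rng.uniform(0.0, 1.0, size=(8, BRDF_DIM))
losses = autoencoder_losses(batch)
```

In a real training loop these terms would be computed with an autodiff framework and the weights tuned; the sketch only shows how the four quantities relate to the encoder, the decoder, and the latent code.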

Right: Instead of predicting BRDF materials directly, we use a material MLP γ to generate a latent BRDF code z, which is then decoded into 7-dim BRDF parameters by our pretrained decoder. As in prior works, the SDS loss is applied to the rendered images and drives the training of our material MLP. (Note that the roughness kr is a scalar; we visualize it in the green channel here.)
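The data flow described above (surface point, then latent code z, then 7-dim BRDF parameters) can be sketched as follows. This is a shape-level illustration only: the layer sizes, the random weights, and the channel layout (3 diffuse, 1 roughness, 3 specular) are assumptions made for the example, and the rendering and SDS steps are omitted.

```python
import numpy as np

rng = np.random.default_rng(0)
LATENT_DIM, HIDDEN = 4, 16  # assumed sizes

# Hypothetical weights for the material MLP (trainable in the real pipeline).
W1 = rng.normal(scale=0.5, size=(3, HIDDEN))
W2 = rng.normal(scale=0.5, size=(HIDDEN, LATENT_DIM))
# Stand-in for the frozen, pretrained BRDF decoder.
W_dec = rng.normal(scale=0.5, size=(LATENT_DIM, 7))


def material_mlp(points):
    """Map 3D surface points to latent BRDF codes z."""
    return np.maximum(points @ W1, 0.0) @ W2


def decode_brdf(z):
    """Decode latent codes into 7-dim BRDF parameters in (0, 1)."""
    return 1.0 / (1.0 + np.exp(-(z @ W_dec)))


def split_brdf(params):
    """Split into diffuse, roughness, specular (ASSUMED channel order)."""
    return params[:, 0:3], params[:, 3:4], params[:, 4:7]


def roughness_to_rgb(k_r):
    """Place the scalar roughness in the green channel for visualization."""
    rgb = np.zeros((k_r.shape[0], 3))
    rgb[:, 1] = k_r[:, 0]
    return rgb


points = rng.uniform(-1.0, 1.0, size=(5, 3))  # sample surface points
z = material_mlp(points)
k_d, k_r, k_s = split_brdf(decode_brdf(z))
rough_vis = roughness_to_rgb(k_r)
```

Because the decoder is pretrained and kept fixed, only the material MLP would receive gradients from the image-space loss, so every predicted material stays on the decoder's learned material distribution.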

Results

| Text prompt | Text prompt |
| --- | --- |
| A sliced loaf of fresh bread | A plate piled high with chocolate chip cookies |
| A 3D model of an adorable cottage with a thatched roof | A delicious croissant |
| A DSLR photo of a hamburger | An ice cream sundae |
| A pineapple | A rabbit, animated movie character, high detail 3d model |
| A car made out of sushi | A blue tulip |

The gallery of our text-to-3D results. Shapes, normal maps, and shaded images from two random viewpoints are presented.

Relighting results. Our generated 3D assets are relit under a rotating environment light.

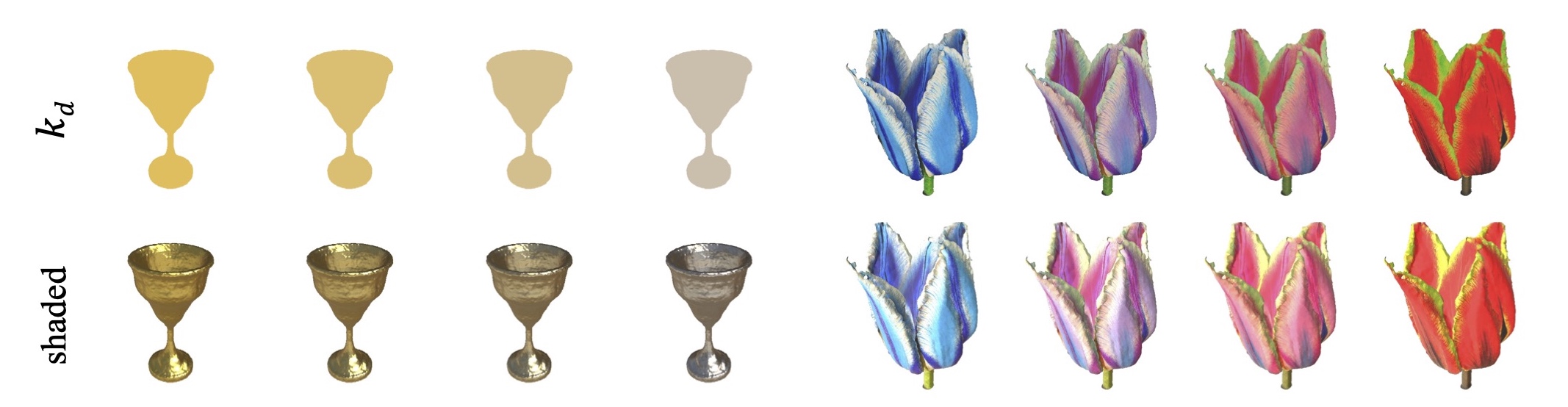

Material interpolation. Thanks to the smooth latent space of the BRDF auto-encoder, we can linearly interpolate between BRDF embeddings. As shown, a golden goblet gradually turns into a silver goblet, and the color of the tulip gradually changes from blue to red.
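Linear interpolation between two latent embeddings amounts to evaluating z(t) = (1 - t) z_start + t z_end for t in [0, 1]. A minimal sketch follows; the latent vectors and their dimensionality are made-up placeholders, and decoding each interpolated code back to BRDF parameters is left to the pretrained decoder.

```python
import numpy as np


def interpolate_latents(z_start, z_end, num_steps):
    """Return num_steps codes evenly spaced on the line from z_start to z_end."""
    z_start = np.asarray(z_start, dtype=float)
    z_end = np.asarray(z_end, dtype=float)
    t = np.linspace(0.0, 1.0, num_steps)[:, None]  # column of blend weights
    return (1.0 - t) * z_start + t * z_end


# Placeholder embeddings (hypothetical values, not real MATLABER codes).
z_gold = np.array([1.0, -0.5, 0.2, 0.0])
z_silver = np.array([-1.0, 0.5, 0.6, 0.4])

path = interpolate_latents(z_gold, z_silver, num_steps=5)
```

Each row of `path` is one intermediate embedding; the first row equals the start code and the last row equals the end code.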

Related Links

Many excellent works are related to MATLABER:

DreamFusion: Text-to-3D using 2D Diffusion

Score Jacobian Chaining: Lifting Pretrained 2D Diffusion Models for 3D Generation

Magic3D: High-Resolution Text-to-3D Content Creation

Fantasia3D: Disentangling Geometry and Appearance for High-quality Text-to-3D Content Creation

Latent-NeRF for Shape-Guided Generation of 3D Shapes and Textures

ProlificDreamer: High-Fidelity and Diverse Text-to-3D Generation with Variational Score Distillation

BibTeX

@article{xu2023matlaber,
  title={MATLABER: Material-Aware Text-to-3D via LAtent BRDF auto-EncodeR},
  author={Xu, Xudong and Lyu, Zhaoyang and Pan, Xingang and Dai, Bo},
  journal={arXiv preprint arXiv:2308.09278},
  year={2023}
}